Architecting SoCs for Success at ‘The Edge’

This article is based on a presentation by Paul Martin, Head of the Sondrel Architecture Team, who was invited to speak at an Arm® seminar in Stockholm earlier this year. Sondrel is accredited as an Arm® Approved Design Partner.

Introduction

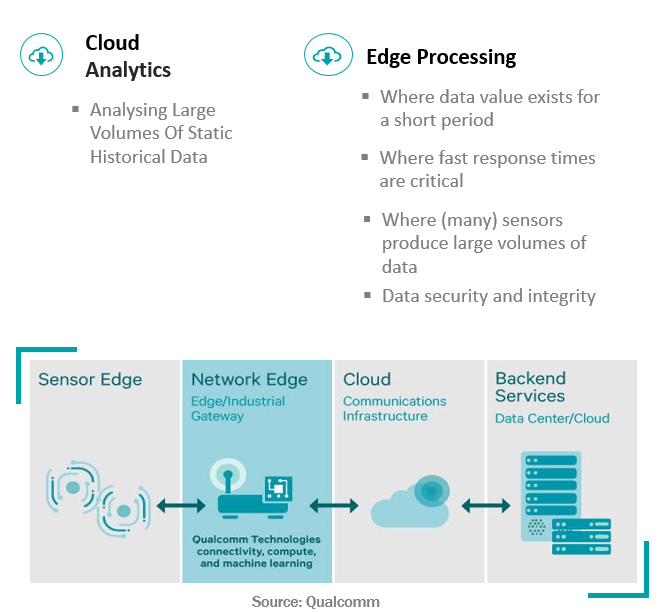

One observable trend over the last three years is that the ‘Internet of Things’ hasn’t quite panned out as originally envisaged, which was as simple sensor nodes and devices at the edge of a network sending data to the cloud for processing on high-performance servers.

It’s widely acknowledged that there is a need for increased compute performance at the edge of the network, close to the sensors. Cloud-based processing is good for processing and analysing large volumes of static, historical data, but the latency and cost of transferring data mean that it makes more sense to filter this data at the edge or, where time is critical, to process it there in real time.

ADAS and autonomous vehicles are an example of this. Reliance on the cloud would not be suitable for safety-critical functions, as existing and proposed communications networks are simply not reliable enough.

Trends also indicate a requirement for analytics at the edge. These solutions use artificial intelligence (AI) technologies to support applications like object, hazard and facial recognition. One recent Sondrel-engineered design, for example, has the compute power to support real-time video analytics using convolutional neural network IP, and sits inside each remote camera module in a security system.

A picture is emerging where we will see a hybrid of cloud and edge computing to most efficiently, affordably and reliably support edge technology solutions across all industry verticals, with more devices incorporating AI capabilities.

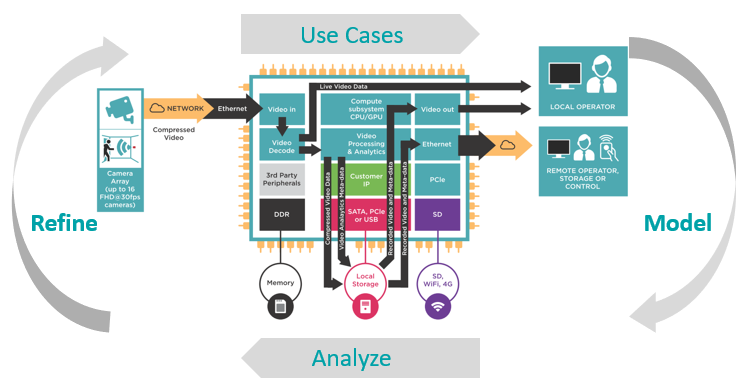

An Edge Design Example

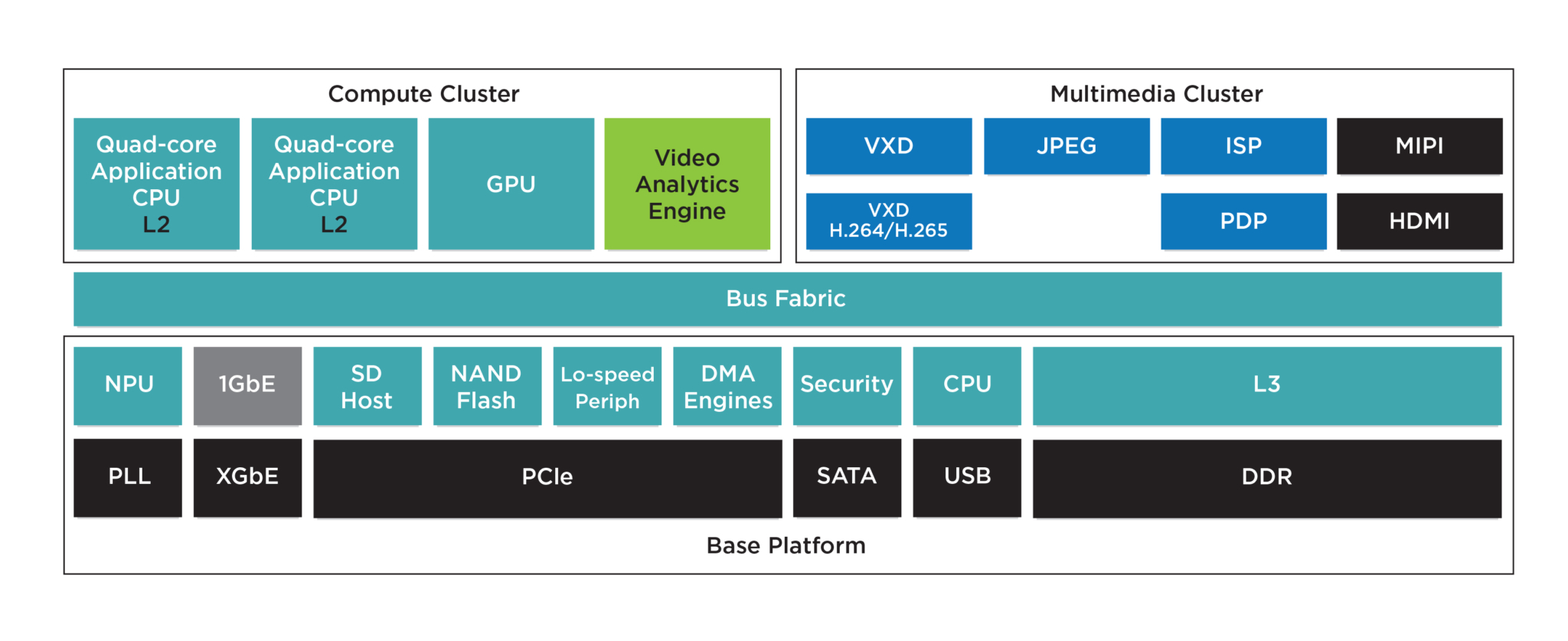

The Sondrel reference design shown in the diagram is an example of an ‘edge’ computing device (ECD). A reference design is not a fully formed SoC in itself, but a collection of subsystems that can be reused across many applications. Re-use is key to keeping development time and costs down for a product roadmap.

It can be configured to support micro-servers, video analytics and some data centre applications. As well as heterogeneous compute, it offers high bandwidth, extensive connectivity and support for local storage.

So how do we set about designing a SoC of similar complexity for our clients?

Architecture

We invest heavily in ensuring we have the right SoC architecture to deliver the desired functionality and performance, within time to market and cost constraints. Inevitably this means compromise.

Process

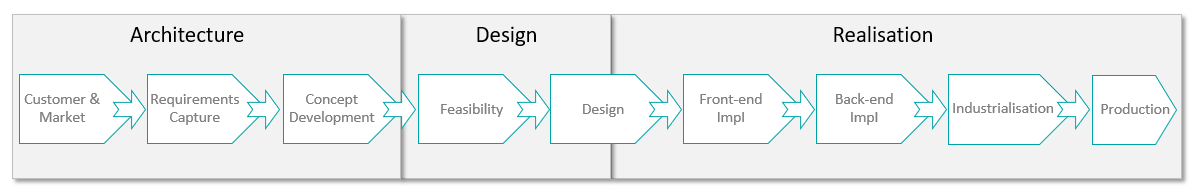

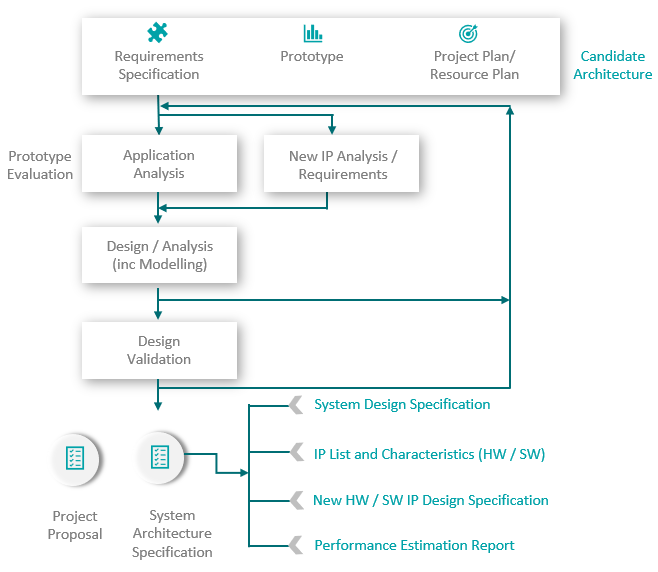

We follow a sequence of steps to understand the purpose of the device, what the customer requirements are, and which of them are feasible within the cost and time constraints.

We continue qualifying, quantifying and prioritising iteratively until we arrive at an architectural specification that provides a realistic schedule and cost – an RFQ Response. This process is often a project in itself – an architectural study.

Requirements Capture

The first step in the architecture study phase is requirements capture.

This starts with a client’s high-level market requirement, where they may not have a clear idea how to specify the SoC sufficiently so that chip designers can work from it.

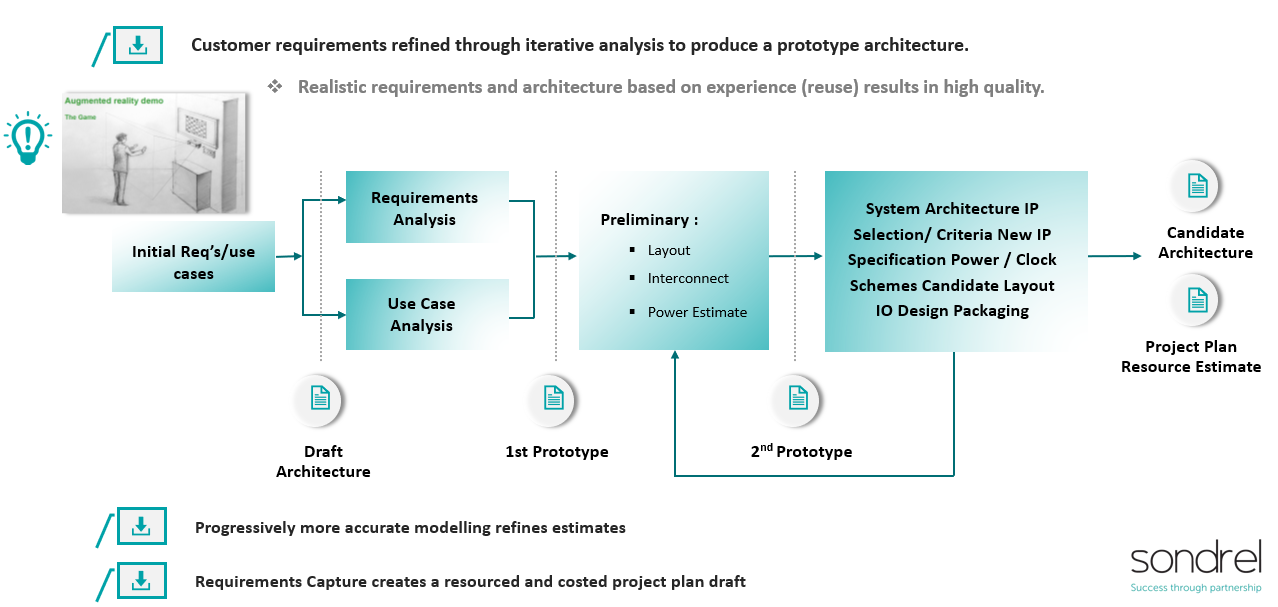

In the example shown, the customer has produced an artist’s sketch to illustrate the use case they want to support. The customer wants to enable users to interact with a screen using gesture recognition.

This is refined into a set of environmental and system constraints, such as power, functionality and interface requirements, along with a detailed set of use cases. From this we can develop and refine a prototype architecture that is allocated and mapped onto the software and hardware components required to deliver the use case.

Prototype & Candidate Architecture

The prototype architecture is made up of building blocks, or IP. It identifies which IP can be sourced, which IP needs to be developed, and how the various compute engines, interfaces and memory systems are connected to one another. Physical constraints such as thermal and packaging requirements are also identified.

This is then refined to a candidate architecture that, along with the design specification, can give us an estimation of die size, NRE costs and project schedule. This is important in determining whether the proposed solution can support the customer’s business case.
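A first-pass die size estimate of this kind is often little more than an area roll-up. The sketch below is a minimal illustration under stated assumptions, not Sondrel's actual method: the block names, the areas and the overhead fraction are made-up placeholder values, and `estimate_die_area` is a hypothetical helper.

```python
def estimate_die_area(block_areas_mm2, overhead_fraction):
    """Sum the block areas and add a fractional allowance for routing,
    pad ring and other top-level overhead (assumed, not measured)."""
    core_area = sum(block_areas_mm2.values())
    return core_area * (1.0 + overhead_fraction)


# Placeholder block areas in mm^2 (illustrative values only).
blocks = {
    "cpu_cluster": 4.0,
    "cnn_accel": 6.0,
    "ddr_phy": 3.0,
    "video": 2.0,
    "io_and_misc": 1.0,
}

die_area = estimate_die_area(blocks, overhead_fraction=0.25)
print(f"Estimated die area: {die_area:.1f} mm^2")
```

Even a rough figure like this lets the area of each candidate block be traded against cost early, before any detailed implementation work.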

Architecture Specification

All being well, the next step is to develop this candidate architecture to the next level of detail: a viable system architecture specification, along with a project proposal that we are confident enough in to generate a formal quotation for the customer. If accepted, this allows the development project to start.

During this architectural specification stage we model and analyse key system behaviours and data flows for each use case and validate that the architecture will behave as intended.

Modelling

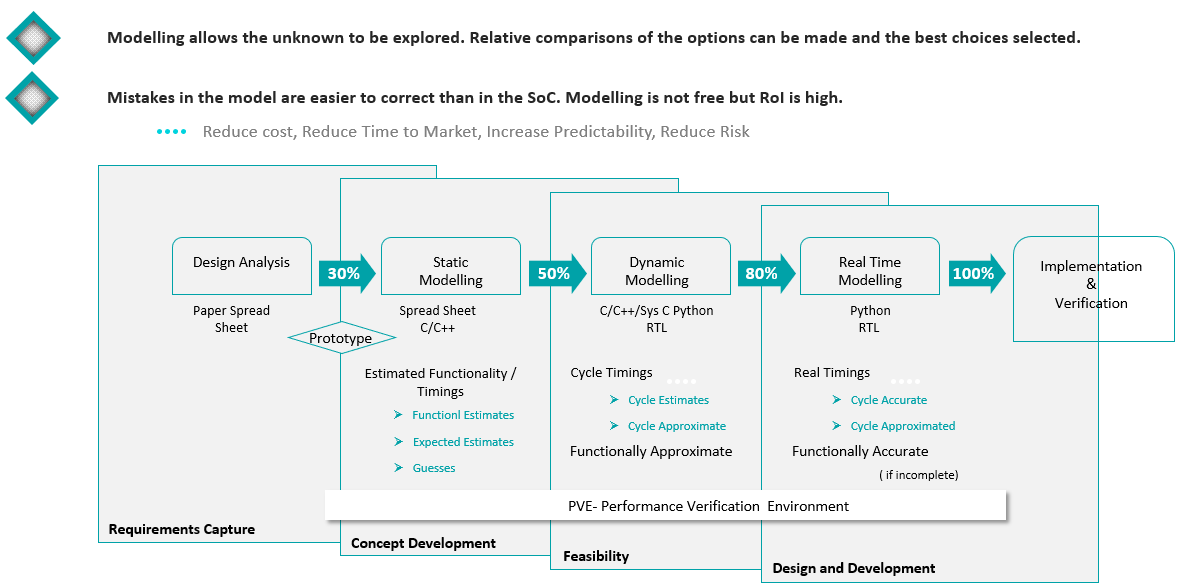

Modelling can range from fairly simple spreadsheet-based calculations to more sophisticated dynamic simulations. Investment in modelling at this stage can be a great way to mitigate risk and accelerate arriving at a proposal that you can have very high confidence in. This is where the reference design approach benefits us as we have an existing modelling environment that we can configure according to the project needs.

In fact, modelling the system is a key tool that we can leverage throughout the development cycle. It’s one of Sondrel’s major value adds, in that we have invested heavily in developing and supporting this competence.

Highly abstracted models produce results quickly, which allows our architects to explore different options early in the process.

As the design evolves we trade fast turnarounds for increased accuracy, so in the concept development phase we may be modelling the system statically using spreadsheets or developing some C models to understand how specific algorithms might be implemented.
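A static, spreadsheet-style model of this kind can be as simple as a bandwidth budget. The following is a minimal sketch, assuming hypothetical bus masters, made-up average bandwidth figures and an assumed memory efficiency factor; none of these numbers come from a real design.

```python
from dataclasses import dataclass


@dataclass
class Master:
    name: str
    read_mbps: float   # assumed average read demand, MB/s
    write_mbps: float  # assumed average write demand, MB/s


def memory_budget(masters, peak_mbps, efficiency):
    """Return (total demand, usable bandwidth, utilisation) for a shared memory."""
    demand = sum(m.read_mbps + m.write_mbps for m in masters)
    usable = peak_mbps * efficiency  # peak derated by an assumed efficiency
    return demand, usable, demand / usable


# Illustrative masters and figures only.
masters = [
    Master("camera_isp", 600.0, 600.0),
    Master("cnn_accel", 2400.0, 400.0),
    Master("cpu_cluster", 800.0, 400.0),
    Master("video_encoder", 500.0, 300.0),
]

demand, usable, utilisation = memory_budget(masters, peak_mbps=12800.0, efficiency=0.625)
print(f"demand {demand:.0f} MB/s, usable {usable:.0f} MB/s, utilisation {utilisation:.0%}")
```

A static budget like this says nothing about latency or burst behaviour, which is why later stages move to dynamic simulation.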

Development

As we move into development, the models evolve to add even more accuracy, adding realistic cycle timing and traffic profiling that enable us to analyse how data flows through the system and uses the available compute and memory resources. This allows us to design out bottlenecks in the on-chip interconnects that limit performance, and we can add real implementations as they become available during development.

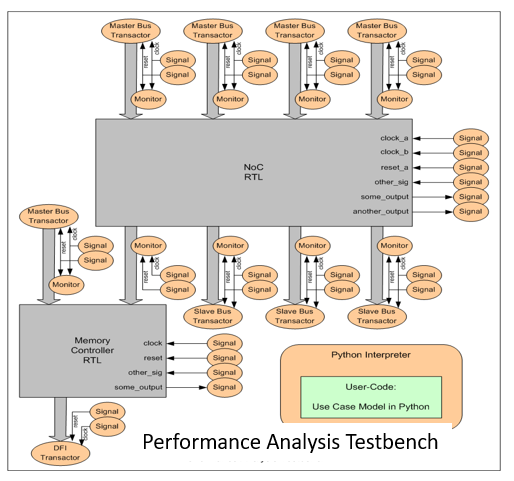

Underpinning this is our proprietary Performance Verification Environment (PVE).

PVE

What does the Performance Verification Environment (PVE) look like?

SoC Example for an Edge Camera Application

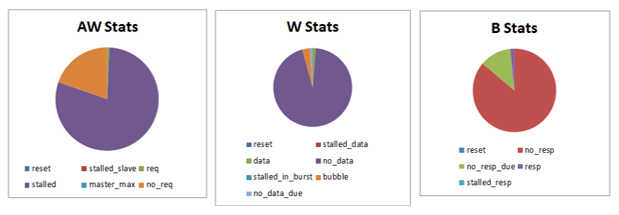

PVE is an environment Sondrel has developed that allows us to analyse the behaviour of the SoC in terms of how data flows in the device. We need to confirm that the SoC infrastructure, such as the on-chip interconnect and memory subsystems, enables the computing resources and other bus masters in the design to send and receive data as required.

Diagram Relating to a Multicore Heterogeneous SoC Design (16nm)

Very early on we make assumptions about the profile of the data traffic from the various devices in the chip to shared resources such as the memory. We have programmable traffic generators that we can configure algorithmically or with historical data sets extracted from similar designs, and we use these to analyse bandwidth and latency statistics and identify any bottlenecks that might negatively affect the ability to satisfy a particular use case.

As the design evolves we can replace the traffic models with more detailed models, and then with the RTL code from which we implement the device. Eventually we can run simulations with representative software, and we can accelerate those simulations using emulation technology so that we can run sufficient cycles to stress the system. However, it doesn’t stop there, as we build in debug infrastructure and monitors that allow us to extract the same data from real silicon to validate the design and provide the data sets we can use to develop future devices.
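To make the idea of traffic generators and latency statistics concrete, here is a toy sketch. It is not PVE: the generator, the single first-come first-served memory port, the master names and all timing values are assumptions chosen purely for illustration.

```python
import random
from statistics import mean

SERVICE_CYCLES = 3  # assumed cycles the memory port needs per request


def generate_traffic(name, n_requests, mean_gap, rng):
    """Hypothetical traffic generator: issue times with random gaps (in cycles)."""
    t = 0
    events = []
    for _ in range(n_requests):
        t += rng.randint(1, 2 * mean_gap - 1)  # uniform gap, mean == mean_gap
        events.append((t, name))
    return events


def simulate_shared_memory(events, service_cycles):
    """Serve requests in issue order on one port; record per-master latency."""
    latencies = {}
    port_free_at = 0
    for issue_time, name in sorted(events):
        start = max(issue_time, port_free_at)  # wait if the port is busy
        port_free_at = start + service_cycles
        latencies.setdefault(name, []).append(port_free_at - issue_time)
    return latencies, port_free_at


rng = random.Random(1)  # fixed seed so runs are repeatable
events = generate_traffic("isp", 200, 10, rng) + generate_traffic("cnn", 200, 6, rng)
latencies, end_time = simulate_shared_memory(events, SERVICE_CYCLES)

for name, lat in latencies.items():
    print(f"{name}: mean latency {mean(lat):.1f} cycles, max {max(lat)} cycles")

utilisation = len(events) * SERVICE_CYCLES / end_time
print(f"port utilisation {utilisation:.0%}")
```

Shortening the gaps or raising `SERVICE_CYCLES` pushes the port towards saturation, and the maximum latency grows quickly; that is the kind of bottleneck a more detailed model would then examine.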

What other tools do we have to deal with complexity?

‘Whilst complexity of the system is greater than the simple sum of the complexity of its components, the converse is also true.’

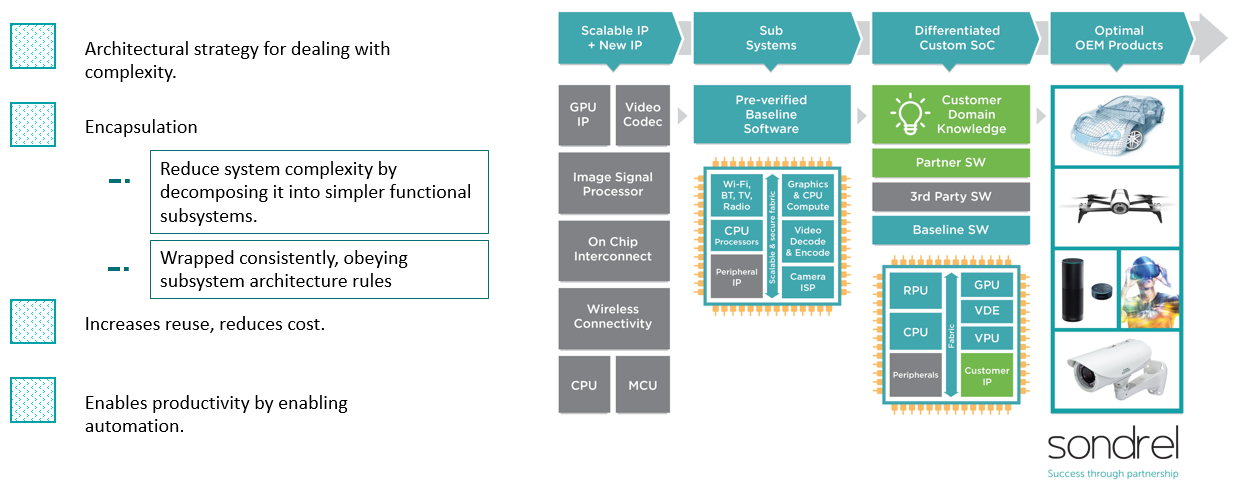

We decompose a SoC into simpler subsystems according to a well-defined set of architectural rules. The subsystems have common characteristics, like clocks and power domains. The subsystems are then wrapped so that at the boundary they again follow specific interface rules. This enables us to implement each of these subsystems independently such that we have a series of reusable building blocks. Changes made within the subsystem ideally do not affect or have limited and understood effects on the integration of these blocks at the top level.

There are many benefits to this approach. It enables us to automate many of the integration steps, boosting productivity. It allows us to maximise reuse of the subsystems. And it allows us to add new subsystems to produce a custom, optimal solution for a customer, at lower cost and, of course, with a faster time to market.
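One way to picture how well-defined boundary rules make integration automatable is a simple rule checker. The sketch below is purely illustrative: the rule set, the `Port` and `Subsystem` classes and the example subsystem are hypothetical, and the allowed protocol names are only an assumed example list.

```python
from dataclasses import dataclass, field

# Assumed rule: a wrapped subsystem may only expose these interface types.
ALLOWED_PROTOCOLS = {"AXI4", "APB", "IRQ"}


@dataclass
class Port:
    name: str
    protocol: str
    clock_domain: str
    power_domain: str


@dataclass
class Subsystem:
    name: str
    clock_domains: set
    power_domains: set
    ports: list = field(default_factory=list)


def check_boundary(subsystem):
    """Return a list of human-readable rule violations at the boundary."""
    problems = []
    for p in subsystem.ports:
        if p.protocol not in ALLOWED_PROTOCOLS:
            problems.append(f"{subsystem.name}.{p.name}: protocol {p.protocol} not allowed")
        if p.clock_domain not in subsystem.clock_domains:
            problems.append(f"{subsystem.name}.{p.name}: foreign clock domain {p.clock_domain}")
        if p.power_domain not in subsystem.power_domains:
            problems.append(f"{subsystem.name}.{p.name}: foreign power domain {p.power_domain}")
    return problems


# Hypothetical subsystem with one compliant and one non-compliant port.
video = Subsystem(
    "video",
    clock_domains={"clk_video"},
    power_domains={"pd_video"},
    ports=[
        Port("axi_m", "AXI4", "clk_video", "pd_video"),
        Port("dbg", "CUSTOM", "clk_cpu", "pd_video"),
    ],
)

violations = check_boundary(video)
for v in violations:
    print(v)
```

Because every subsystem is described in the same way, a check like this can run over the whole design before top-level integration starts.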

In conclusion, there are five takeaways for your consideration when developing a successful system on chip design at the edge.

Key Considerations

- Increased levels of processing are appearing at the network edge.

- These are often complex, heterogeneous, multicore systems, e.g. smart factories, smart cameras, healthcare, ADAS / autonomous vehicles.

- Architecting these SoCs requires a rigorous process to capture and develop use cases and detailed market requirements - “Clear purpose”.

- Advanced techniques are required to validate architectural concepts and ensure designs can be reliably and predictably implemented - Modelling, physically aware “subsystem” approach, performance analysis.

- A reference design approach can significantly accelerate time to market (TTM) and de-risk product development.

Sondrel’s focus is on digital SoC design. We cover a wide spectrum, from wireless connected sensor nodes, through medium complexity devices, up to high-end heterogeneous computing devices that support video analytics, AR/VR, blockchain and cryptocurrency mining, ADAS and security monitoring. The company has a long history in video processing and graphics, which is highly relevant as many applications now rely on a camera as the sensor.

To have a conversation with our architectural team, email info@sondrel.com, or find telephone and regional contact emails on our ‘contact us’ website page.